Intro

Running Lighthouse and accessibility audits against every page on every test run sounds great in theory. In practice, it will wreck your CI pipeline. A single Lighthouse run launches a headless browser, loads the page, and performs dozens of audits, and auditing both desktop and mobile configurations means doing all of that twice per page. Each page takes several seconds per configuration. Multiply that by every page on the site, and you are looking at many minutes of Lighthouse alone, on top of the unit and integration tests.

For a modest site with 15 pages, that is roughly 2 minutes of Lighthouse audits. At the same rate, a site with 100 pages would need more than ten minutes, and plenty of production sites have hundreds or thousands of pages. That is not sustainable. You could throw hardware at the problem or parallelize the runs, but headless browsers are resource-hungry, and running multiple Lighthouse instances simultaneously tends to skew results, since they compete for the same CPU and memory.
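As a rough back-of-the-envelope check, assuming audit time scales linearly with page count: the 15-page, 2-minute figure works out to about 4 seconds per page per configuration when both desktop and mobile are audited. The helper below is hypothetical and only does that arithmetic.

```typescript
// Hypothetical helper: rough Lighthouse time budget, assuming linear scaling.
// The 4 s per page per configuration figure is derived from the
// "15 pages, roughly 2 minutes, desktop + mobile" estimate.
const SECONDS_PER_PAGE_PER_CONFIG = 4;
const CONFIGS = 2; // desktop and mobile

function estimateAuditMinutes(pageCount: number): number {
  return (pageCount * CONFIGS * SECONDS_PER_PAGE_PER_CONFIG) / 60;
}

console.log(estimateAuditMinutes(15).toFixed(1)); // "2.0"
console.log(estimateAuditMinutes(100).toFixed(1)); // "13.3"
console.log(estimateAuditMinutes(1000).toFixed(1)); // "133.3"
```

Real audit times vary by page weight and machine, so treat these as orders of magnitude rather than predictions.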

So what do you do? You could skip these audits and hope for the best. You could run them only on specific pages you handpick. Or you could do something a little more interesting: test a subset of pages on each run, and let the passage of time give you full coverage.

Spot-Check Testing

I’ve been calling this approach spot-check testing. The idea is straightforward. On each CI run, you select a small number of pages to audit. Over enough runs, every page gets tested. You get the coverage benefits of exhaustive testing without the time cost.

This isn’t a new concept in the broader testing world. Meta published research on their Predictive Test Selection system, which uses ML models to select a subset of regression tests per code change. Google has published similar work on test selection algorithms (evaluating strategies that use recent test execution history to decide which tests to rerun) to manage CI at massive scale. The core insight is the same: you don’t need to run everything every time if you are smart about what you pick.

My version is simpler than what Meta and Google do. No ML models and no dependency graphs. Just a shuffle algorithm and a few heuristics to make it work in practice.

Choosing a Sampling Strategy

There are several ways to decide which pages get tested on a given run. Each has tradeoffs worth considering:

| Strategy | How it works | Pros | Cons |
|---|---|---|---|
| Random | Shuffle all pages, pick N | Simple; good variance across page types; catches unexpected regressions | Pages will be revisited several times before full coverage is achieved |
| Sequential | Step through pages in order, N at a time | Guarantees full coverage in the fewest runs | Ordered page lists may cluster similar pages (e.g., alphabetically similar slugs often have similar templates), delaying coverage of distinct page types |
| Priority-Weighted | Assign weights by traffic or importance, sample proportionally | High-value pages tested more often | Requires maintaining weight data; low-priority pages may go a long time without testing |
| Fixed Representative | Handpick a static list of pages | Deterministic; easy to reason about | Blind spots for pages not on the list; list goes stale as the site evolves |
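For comparison, here is a rough sketch of the sequential and priority-weighted strategies from the table. Neither is what this article ends up using; `runNumber` and the weight map are hypothetical inputs you would have to persist or maintain yourself, and the weighted version uses successive proportional draws without replacement.

```typescript
// Sequential: step through the page list N at a time, wrapping around.
// `runNumber` is a hypothetical counter persisted between CI runs.
function selectSequential(allPages: string[], runNumber: number, n: number): string[] {
  if (allPages.length === 0) return [];
  const start = (runNumber * n) % allPages.length;
  const picked: string[] = [];
  for (let i = 0; i < Math.min(n, allPages.length); i++) {
    picked.push(allPages[(start + i) % allPages.length]);
  }
  return picked;
}

// Priority-weighted: draw pages with probability proportional to their weight,
// removing each pick from the pool so no page is chosen twice in one run.
// The weights (e.g. traffic) are hypothetical data you would maintain yourself.
function selectWeighted(
  weights: Map<string, number>,
  n: number,
  random: () => number = Math.random,
): string[] {
  const pool = [...weights.entries()].filter(([, w]) => w > 0);
  const picked: string[] = [];
  while (picked.length < n && pool.length > 0) {
    const total = pool.reduce((sum, [, w]) => sum + w, 0);
    let r = random() * total;
    let idx = 0;
    // Walk the cumulative weights until r falls inside an entry's share.
    while (idx < pool.length - 1 && r >= pool[idx][1]) {
      r -= pool[idx][1];
      idx++;
    }
    picked.push(pool[idx][0]);
    pool.splice(idx, 1);
  }
  return picked;
}

const pages = ["/", "/about", "/contact", "/celestron", "/jacuzzi"];
console.log(selectSequential(pages, 0, 2)); // [ '/', '/about' ]
console.log(selectSequential(pages, 2, 2)); // [ '/jacuzzi', '/' ]
console.log(selectWeighted(new Map([["/", 10], ["/about", 3], ["/contact", 1]]), 2));
```

The sequential version needs only a counter, but it inherits the clustering problem from the list order; the weighted version needs weight data that has to be kept current.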

I went with a randomized approach, but with a twist: the algorithm prefers untested pages over already-tested ones, which gives you much of the coverage speed of the sequential approach without the clustering problem. Previously failing pages also get retested every run, which borrows from the priority-weighted approach. In other words, you can mix and match these strategies as you see fit.

How It Works

Pages are selected with these steps:

- The homepage always gets tested. It’s the most visited page, and regressions there are the most visible.

- Any page that failed on the last run gets retested. This is the key nuance that makes spot-check testing reliable. It ensures failures are resurfaced on each test run.

- Fill remaining slots with randomly selected pages, preferring pages that haven’t been tested yet.

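Here’s the actual TypeScript code from my accessibility test. On nicholaswestby.com (a portfolio site with 15 pages), RANDOM_PAGE_COUNT is set to 3, meaning each CI run tests 4 pages total: the homepage plus 3 others. Previously failing pages claim those slots first, so a run only grows beyond 4 pages when more than 3 non-homepage pages are failing.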

```typescript
// Shape of the persisted results file (matches the results JSON file
// described in the next section).
interface Pa11yResults {
  failing: string[];
  passed: string[];
}

const RANDOM_PAGE_COUNT = 3;

function selectPages(results: Pa11yResults, allPages: string[]): string[] {
  // The homepage is always tested
  const selected = new Set<string>(["/"]);

  // Add failing pages first
  for (const page of results.failing) {
    selected.add(page);
  }

  // Remaining pages that aren't already selected
  const remaining = allPages.filter((p) => !selected.has(p));

  // Shuffle remaining (Fisher-Yates)
  for (let i = remaining.length - 1; i > 0; i--) {
    const j = Math.floor(Math.random() * (i + 1));
    [remaining[i], remaining[j]] = [remaining[j], remaining[i]];
  }

  // Prefer pages not yet passed (untested)
  const untested = remaining.filter((p) => !results.passed.includes(p));
  const tested = remaining.filter((p) => results.passed.includes(p));

  // Fill until RANDOM_PAGE_COUNT + 1 pages are selected
  // (the homepage and any failing pages count toward this total)
  const candidates = [...untested, ...tested];
  for (const page of candidates) {
    if (selected.size >= RANDOM_PAGE_COUNT + 1) break;
    selected.add(page);
  }

  return [...selected];
}
```

Here’s what this process looks like from a conceptual standpoint:

With those 4 pages per run, roughly 27% of the site gets audited each time. Thanks to the untested-first approach, you will typically get full coverage within 5 CI runs.
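To sanity-check the five-run figure, here is a small simulation. It uses a condensed copy of the `selectPages` logic above and assumes every audit passes (so no failing pages take up slots) and that the `passed` list accumulates across runs; the page slugs are made up.

```typescript
// Simulation: how many CI runs until every page has been audited,
// assuming all audits pass and `passed` accumulates between runs.
const RANDOM_PAGE_COUNT = 3;

interface Results {
  failing: string[];
  passed: string[];
}

// Condensed version of the selection logic: homepage, failures, then
// shuffled untested pages before already-passed ones.
function selectPages(results: Results, allPages: string[]): string[] {
  const selected = new Set<string>(["/", ...results.failing]);
  const remaining = allPages.filter((p) => !selected.has(p));
  for (let i = remaining.length - 1; i > 0; i--) {
    const j = Math.floor(Math.random() * (i + 1));
    [remaining[i], remaining[j]] = [remaining[j], remaining[i]];
  }
  const untested = remaining.filter((p) => !results.passed.includes(p));
  const tested = remaining.filter((p) => results.passed.includes(p));
  for (const page of [...untested, ...tested]) {
    if (selected.size >= RANDOM_PAGE_COUNT + 1) break;
    selected.add(page);
  }
  return [...selected];
}

function runsToFullCoverage(allPages: string[]): number {
  const results: Results = { failing: [], passed: [] };
  let runs = 0;
  while (!allPages.every((p) => results.passed.includes(p))) {
    runs++;
    for (const page of selectPages(results, allPages)) {
      if (!results.passed.includes(page)) results.passed.push(page);
    }
  }
  return runs;
}

// 15 pages: the homepage plus 14 hypothetical slugs.
const pages = ["/", ...Array.from({ length: 14 }, (_, i) => `/page-${i + 1}`)];
console.log(runsToFullCoverage(pages)); // 5
```

With no failures, the result does not depend on the shuffle: 14 non-homepage pages at 3 new pages per run take ceil(14 / 3) = 5 runs. Failing pages occupy slots and stretch that out.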

Persisting State Across Runs

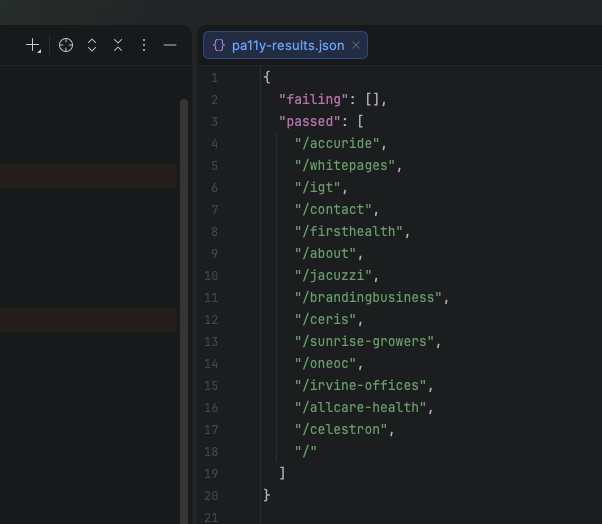

Results persist between CI runs via a committed JSON file that records successes and failures:

In text form, the file is structured like this:

```json
{
  "failing": [],
  "passed": [
    "/",
    "/about",
    "/contact",
    "/celestron",
    "/jacuzzi",
    "/sunrise-growers"
  ]
}
```

This file gets committed to the repository. When a test run starts, it loads this file to know which pages failed last time (and therefore must be retested) and which pages have never been tested (and should be prioritized). After the tests finish, the file gets updated:

```typescript
// Remove pages from failing that now pass
const stillFailing = results.failing.filter(
  (p) => !pagesToTest.includes(p) || newFailing.includes(p)
);

// Add newly failing pages
for (const p of newFailing) {
  if (!stillFailing.includes(p)) stillFailing.push(p);
}

await saveResults({
  failing: stillFailing,
  passed: [...newPassed],
});
```

A page only leaves the failing list when it gets retested and passes. A page that was previously failing but wasn’t selected for testing this run stays in the list. This prevents a failure from silently resolving itself just because the page wasn’t picked for a few runs.
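The same update can be written as a self-contained pure function, which makes the rules easy to unit test. This is a sketch rather than the code above: `updateResults` is a hypothetical name, and it assumes that `passed` accumulates across runs (the committed file lists more pages than a single run tests) and that a page which fails is dropped from `passed`.

```typescript
interface Results {
  failing: string[];
  passed: string[];
}

function updateResults(
  previous: Results,
  pagesToTest: string[],
  newFailing: string[],
): Results {
  const tested = new Set(pagesToTest);
  const failedNow = new Set(newFailing);

  // Keep old failures that were not retested, or were retested and failed again.
  const failing = previous.failing.filter((p) => !tested.has(p) || failedNow.has(p));
  for (const p of newFailing) {
    if (!failing.includes(p)) failing.push(p);
  }

  // Assumption: passed accumulates, minus anything that failed this run.
  const passed = new Set(previous.passed.filter((p) => !failedNow.has(p)));
  for (const p of pagesToTest) {
    if (!failedNow.has(p)) passed.add(p);
  }

  return { failing, passed: [...passed] };
}

const next = updateResults(
  { failing: ["/contact"], passed: ["/", "/about"] },
  ["/", "/contact", "/jacuzzi"],
  ["/jacuzzi"],
);
console.log(JSON.stringify(next));
// {"failing":["/jacuzzi"],"passed":["/","/about","/contact"]}
```

Here `/contact` was retested and passed, so it moves from `failing` to `passed`, while `/jacuzzi` is newly failing.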

Why Sampling Works Better Than Handpicking

While it is tempting to handpick a representative set of pages, there are a couple of issues with that approach.

First, you have to decide which pages are “representative,” and that decision goes stale as the site evolves. A page that was once typical might become an outlier, or vice versa. Maintaining the page list becomes a recurring chore instead of something that takes care of itself.

Second, a fixed list creates blind spots. Pages not on the list never get tested. With sampling, every page will eventually get its turn, and the probability of catching a regression is a function of how many CI runs you complete rather than how good your initial selection was.
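To put a rough number on that, here is a simple model under stated assumptions: once every page has been tested at least once, the untested-first preference no longer applies, so each run picks its random slots uniformly from the non-homepage pages. It also assumes no failing pages occupy those slots and that a regression persists until it is caught. `detectionProbability` is a hypothetical helper, not part of the test suite described here.

```typescript
// Probability that a regression on one specific page is caught within `runs`
// CI runs, when each run picks `slots` pages uniformly from `pool` candidates.
function detectionProbability(pool: number, slots: number, runs: number): number {
  const missPerRun = 1 - slots / pool;
  return 1 - Math.pow(missPerRun, runs);
}

// 15-page site: 14 candidates besides the homepage, 3 random slots per run.
for (const runs of [1, 5, 10, 20]) {
  console.log(runs, detectionProbability(14, 3, runs).toFixed(2));
}
```

For the 15-page site this comes out to roughly 0.21 after one run, 0.70 after five, 0.91 after ten, and 0.99 after twenty. Once the page is caught, the retest-failures rule keeps it in every run until it passes again.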

Lighthouse Configuration

The Lighthouse test uses the same sampling logic. Here are the score thresholds I settled on:

```typescript
const THRESHOLDS = {
  performance: 95,
  accessibility: 100,
  "best-practices": 100,
  seo: 100,
};
```

Each page gets audited in both desktop and mobile configurations, since performance characteristics can differ significantly between them. A page might score 100 on desktop but tank on mobile due to layout shifts or unoptimized images. Running both configurations roughly doubles the per-page audit time, but that is manageable when you are only testing a handful of pages per run.
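A sketch of how a report might be checked against these thresholds. Lighthouse reports each category score in its result object as a number from 0 to 1 (or `null` when a category could not be scored), so the sketch scales scores to 0-100 and rounds them before comparing. The `thresholdFailures` helper and the sample report are hypothetical, and treating a `null` score as a failure is a design choice, not Lighthouse behavior.

```typescript
const THRESHOLDS: Record<string, number> = {
  performance: 95,
  accessibility: 100,
  "best-practices": 100,
  seo: 100,
};

// Minimal shape of the `categories` section of a Lighthouse result.
type Categories = Record<string, { score: number | null }>;

function thresholdFailures(categories: Categories): string[] {
  const failures: string[] = [];
  for (const [id, minimum] of Object.entries(THRESHOLDS)) {
    const raw = categories[id]?.score ?? null;
    // Missing or null scores count as failures rather than silently passing.
    const score = raw === null ? null : Math.round(raw * 100);
    if (score === null || score < minimum) {
      failures.push(`${id}: ${score ?? "n/a"} < ${minimum}`);
    }
  }
  return failures;
}

// Hypothetical mobile result for one page.
const mobile: Categories = {
  performance: { score: 0.91 },
  accessibility: { score: 1 },
  "best-practices": { score: 1 },
  seo: { score: 1 },
};
console.log(thresholdFailures(mobile)); // [ 'performance: 91 < 95' ]
```

Running the check once per configuration (desktop and mobile) lets a page fail on mobile even when its desktop scores are perfect.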

The Full Test Infrastructure

To tie it all together, here’s the broader test setup. The Pa11y and Lighthouse tests both:

- Discover pages by parsing the sitemap XML from the build output.

- Serve the built site locally so the auditing tools can access it.

- Load the persisted results from the previous run.

- Select pages using the algorithm described above.

- Run the audits.

- Update and save the results file.

Page discovery uses the sitemap, so the test automatically picks up new pages as they are added. No test file changes are needed when you publish a new page:

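```typescript
import { readFile } from "node:fs/promises";
import { join } from "node:path";

// Build output directory (assumed value; use your site's actual dist path)
const DIST_DIR = "dist";

async function discoverPages(): Promise<string[]> {
  const sitemapPath = join(DIST_DIR, "sitemap.xml");
  const xml = await readFile(sitemapPath, "utf-8");

  // Pull every <loc> URL out of the sitemap
  const locs = [...xml.matchAll(/<loc>([^<]+)<\/loc>/g)].map((m) => m[1]);

  // Strip the scheme and host so only the path remains ("/" for the root)
  return locs.map((url) => {
    const path = url.replace(/^https?:\/\/[^/]+/, "");
    return path || "/";
  });
}
```

These resource-intensive audits should run serially rather than in parallel: concurrent headless browser instances compete for CPU and memory, which can skew results and cause timeouts.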
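Running serially is mostly a matter of awaiting each audit before starting the next, rather than collecting all the promises into `Promise.all`. A minimal sketch; `runSerially` and `auditPage` are hypothetical names, with `auditPage` standing in for whatever launches Pa11y or Lighthouse against a single page.

```typescript
async function runSerially<T>(
  pages: string[],
  auditPage: (page: string) => Promise<T>,
): Promise<Map<string, T>> {
  const results = new Map<string, T>();
  for (const page of pages) {
    // Awaiting inside the loop means only one browser-backed audit runs at a time.
    results.set(page, await auditPage(page));
  }
  return results;
}

// Usage with a fake audit that records how many audits overlap.
let active = 0;
let maxActive = 0;
async function fakeAudit(page: string): Promise<string> {
  active++;
  maxActive = Math.max(maxActive, active);
  await new Promise((resolve) => setTimeout(resolve, 10));
  active--;
  return `audited ${page}`;
}

runSerially(["/", "/about", "/contact"], fakeAudit).then((results) => {
  console.log(results.size, maxActive); // 3 1
});
```

Swapping the loop for `Promise.all(pages.map(auditPage))` would push `maxActive` up to the number of pages, which is exactly the contention the serial approach avoids.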

When to Use This Approach

Spot-check testing makes sense when:

- Tests are expensive to run. Lighthouse, accessibility audits, visual regression tests, or anything involving a headless browser.

- You have many test targets. Pages on a site, API routes, or a large set of fixtures with extensive sample data.

- Full coverage on every run is impractical. Too slow for your CI time budget, or too resource-intensive to run reliably in parallel.

- You want eventual full coverage. Every target gets tested, just not all on the same run.

It does not replace deterministic tests. Your unit tests should still run every time. Your critical path E2E tests should still cover the happy path every time. This approach is for the expensive supplementary tests that help improve the quality of your code and content over time.

Better Tests, Less Waiting

You can get the coverage benefits of exhaustive testing without the time cost. Test a subset per run, whether through random sampling, sequential ordering, or something else. Persist results between runs so you know which pages are passing, which are failing, and which haven’t been tested yet. Always retest failures so that regressions cannot escape just because the page wasn’t selected this time around.

Once you set this up, it runs quietly in the background. You commit the results files, and each run picks up where the last one left off. Over time, every page gets covered and failures get caught and retested. If you want to go a step further, temporal ratcheting can ensure the quality bar rises on a schedule rather than just holding steady.